Control theory


Control theory is an interdisciplinary branch of engineering and mathematics that deals with the behaviour of dynamical systems. The desired output of a system is called the reference. When one or more output variables of a system need to follow a certain reference over time, a controller manipulates the inputs to the system to obtain the desired effect on its output.


Overview

Control theory is

- a theory that deals with influencing the behaviour of dynamical systems

- an interdisciplinary subfield of science which originated in engineering and mathematics and has since been adopted by the social sciences, such as psychology, sociology and criminology.

An example

Consider an automobile's cruise control, a device designed to maintain a constant vehicle speed: the desired or reference speed, which is provided by the driver. The system in this case is the vehicle. The system output is the vehicle speed, and the control variable is the engine's throttle position, which influences engine torque output.

A simple way to implement cruise control is to lock the throttle position when the driver engages cruise control. However, on hilly terrain, the vehicle will slow down going uphill and accelerate going downhill. In fact, any parameter that differs from what was assumed at design time will translate into a proportional error in the output velocity, including the exact mass of the vehicle, wind resistance, and tire pressure. This type of controller is called an open-loop controller because there is no direct connection between the output of the system (the vehicle's speed) and the actual conditions encountered; that is to say, the system does not and cannot compensate for unexpected forces.

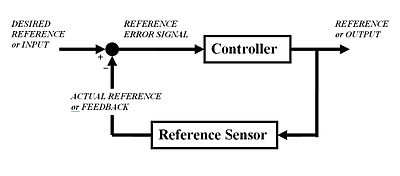

In a closed-loop control system, a sensor monitors the output (the vehicle's speed) and feeds the data to a computer which continuously adjusts the control input (the throttle) as necessary to keep the control error to a minimum (to maintain the desired speed). Feedback on how the system is actually performing allows the controller (the vehicle's on-board computer) to dynamically compensate for disturbances to the system, such as changes in the slope of the ground or wind speed. An ideal feedback control system cancels out all errors, mitigating the effects of any forces that may arise during operation and producing a response in the system that perfectly matches the user's wishes.
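The difference between the two schemes can be illustrated with a small simulation. The sketch below is not a real cruise-control design: the vehicle model (mass, linear drag, a constant road grade), all numerical values and the function names are assumptions made for illustration only. The open-loop controller locks the throttle force at the value that holds the reference speed on a flat road; the closed-loop controller adds a correction proportional to the measured speed error.

```python
def simulate(controller, v_ref=30.0, grade=0.02, t_end=200.0, dt=0.1):
    """Euler-integrate mass*dv/dt = force - drag*v - mass*g*grade; return the final speed."""
    mass, drag, g = 1000.0, 50.0, 9.81    # hypothetical vehicle parameters (kg, N*s/m, m/s^2)
    v = v_ref                              # the car enters the hill at the reference speed
    for _ in range(int(t_end / dt)):
        force = controller(v_ref, v, drag)
        accel = (force - drag * v - mass * g * grade) / mass
        v += accel * dt
    return v

def open_loop(v_ref, v, drag):
    # Throttle locked at the flat-road value; the measured speed v is ignored.
    return drag * v_ref

def closed_loop(v_ref, v, drag, kp=500.0):
    # Same nominal throttle plus a correction proportional to the speed error.
    return drag * v_ref + kp * (v_ref - v)

v_open = simulate(open_loop)
v_closed = simulate(closed_loop)
print(f"open loop:   {v_open:.2f} m/s")
print(f"closed loop: {v_closed:.2f} m/s")
```

With these assumed numbers the open-loop speed settles roughly 3.9 m/s below the 30 m/s reference on the hill, while the closed-loop speed settles roughly 0.4 m/s below it. A purely proportional correction reduces the error but does not remove it entirely.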

History

Although control systems of various types date back to antiquity, a more formal analysis of the field began with a dynamics analysis of the centrifugal governor, conducted by the physicist James Clerk Maxwell in 1868 in a paper entitled On Governors. This described and analyzed the phenomenon of "hunting", in which lags in the system can lead to overcompensation and unstable behaviour. It generated a flurry of interest in the topic, during which Maxwell's classmate Edward John Routh generalized Maxwell's results to the general class of linear systems in 1877. Independently, Adolf Hurwitz analyzed system stability using differential equations in 1895. The resulting criterion is called the Routh-Hurwitz theorem.

A notable application of dynamic control was in the area of manned flight. The Wright Brothers made their first successful test flights on December 17, 1903 and were distinguished by their ability to control their flights for substantial periods (more so than the ability to produce lift from an airfoil, which was known). Control of the airplane was necessary for safe flight.

By World War II, control theory was an important part of fire-control systems, guidance systems and electronics. The Space Race also depended on accurate spacecraft control. Control theory has also seen increasing use in fields such as economics.

People in systems and control

Many active and historical figures have made significant contributions to control theory, for example:

- Alexander Lyapunov (1857-1918), whose work in the 1890s marks the beginning of stability theory.

- Harold S. Black (1898-1983) invented the negative feedback amplifier in the 1930s.

- Harry Nyquist (1889-1976) developed the Nyquist stability criterion for feedback systems in the 1930s.

- Richard Bellman (1920-1984) developed dynamic programming from the 1940s onwards.

- Norbert Wiener (1894-1964) coined the term Cybernetics in the 1940s.

- John R. Ragazzini (1912-1988) introduced digital control and the z-transform in the 1950s.

Classical control theory: the closed-loop controller

To avoid the problems of the open-loop controller, control theory introduces feedback. A closed-loop controller uses feedback to control states or outputs of a dynamical system. Its name comes from the information path in the system: process inputs (e.g. voltage applied to an electric motor) have an effect on the process outputs (e.g. velocity or torque of the motor), which is measured with sensors and processed by the controller; the result (the control signal) is used as input to the process, closing the loop.

Closed-loop controllers have the following advantages over open-loop controllers:

- disturbance rejection (such as unmeasured friction in a motor)

- guaranteed performance even with model uncertainties, when the model structure does not perfectly match the real process and the model parameters are not exact

- unstable processes can be stabilized

- reduced sensitivity to parameter variations

- improved reference tracking performance

In some systems, closed-loop and open-loop control are used simultaneously. In such systems, the open-loop control is termed feedforward and serves to further improve reference tracking performance.

A common closed-loop controller architecture is the PID controller.

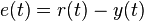

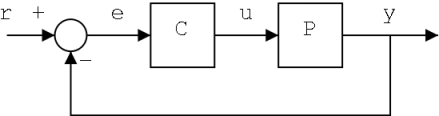

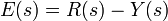

The output of the system y(t) is fed back, through a sensor measurement, and compared with the reference value r(t). The controller C then takes the error e (the difference between the reference and the output) to change the inputs u to the system under control P. This is shown in the figure. This kind of controller is a closed-loop controller or feedback controller.

This is called a single-input-single-output (SISO) control system; MIMO (i.e. multi-input-multi-output) systems, with more than one input/output, are also common. In such cases variables are represented through vectors instead of simple scalar values. For some distributed parameter systems the vectors may be infinite-dimensional (typically functions).

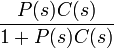

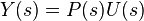

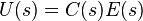

If we assume the controller C and the plant P are linear and time-invariant (i.e. the elements of their transfer functions C(s) and P(s) do not depend on time), the systems above can be analysed using the Laplace transform on the variables. This gives the following relations:

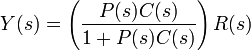

Solving for Y(s) in terms of R(s) gives:

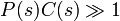

The term $\frac{P(s)C(s)}{1 + P(s)C(s)}$ is referred to as the transfer function of the system. The numerator is the forward gain from r to y, and the denominator is one plus the loop gain of the feedback loop. If $|P(s)C(s)| \gg 1$, i.e. it has a large norm with each value of s, then Y(s) is approximately equal to R(s). This means simply setting the reference controls the output.
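The algebra behind these relations can be written out explicitly. The following is a sketch that assumes unity feedback, meaning the measured output is subtracted directly from the reference with no separate sensor transfer function; that assumption matches the description of the loop given above but is not confirmed by the figures.

```latex
\begin{align}
Y(s) &= P(s)\,U(s) \\
U(s) &= C(s)\,E(s) \\
E(s) &= R(s) - Y(s)
\end{align}
% Substituting the second and third relations into the first:
\begin{align}
Y(s) &= P(s)\,C(s)\,\bigl(R(s) - Y(s)\bigr) \\
\bigl(1 + P(s)\,C(s)\bigr)\,Y(s) &= P(s)\,C(s)\,R(s) \\
Y(s) &= \frac{P(s)\,C(s)}{1 + P(s)\,C(s)}\,R(s)
\end{align}
```

When $|P(s)C(s)|$ is much larger than one, the fraction is close to one, which is why the output then tracks the reference.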

Topics in control theory

Stability

Stability (in control theory) often means that for any bounded input over any amount of time, the output will also be bounded. This is known as BIBO stability (see also Lyapunov stability). If a system is BIBO stable then the output cannot "blow up" (i.e., become infinite) if the input remains finite. Mathematically, this means that for a causal linear system to be stable all of the poles of its transfer function must satisfy some criteria depending on whether a continuous or discrete time analysis is used:

- In continuous time, the Laplace transform is used to obtain the transfer function. A system is stable if the poles of this transfer function lie strictly in the open left half of the complex plane (i.e. the real part of all the poles is less than zero).

- In discrete time, the Z-transform is used. A system is stable if the poles of this transfer function lie strictly inside the unit circle (i.e. the magnitude of all the poles is less than one).

When the appropriate conditions above are satisfied a system is said to be asymptotically stable: the variables of an asymptotically stable control system always decrease from their initial value and do not show permanent oscillations. Permanent oscillations occur when a pole has a real part exactly equal to zero (in the continuous time case) or a modulus equal to one (in the discrete time case). If a simply stable system response neither decays nor grows over time, and has no oscillations, it is marginally stable: in this case the system transfer function has non-repeated poles at the origin of the complex plane (i.e. their real and imaginary components are zero in the continuous time case). Oscillations are present when poles with real part equal to zero have an imaginary part not equal to zero.

Differences between the two cases are not a contradiction. The Laplace transform is in Cartesian coordinates and the Z-transform is in circular coordinates, and it can be shown that

- the negative-real part in the Laplace domain can map onto the interior of the unit circle

- the positive-real part in the Laplace domain can map onto the exterior of the unit circle

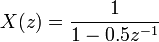

If a system in question has an impulse response of

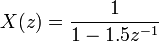

then the Z-transform (see this example), is given by

which has a pole in  (zero imaginary part). This system is BIBO (asymptotically) stable since the pole is inside the unit circle.

(zero imaginary part). This system is BIBO (asymptotically) stable since the pole is inside the unit circle.

However, if the impulse response was

then the Z-transform is

which has a pole at  and is not BIBO stable since the pole has a modulus strictly greater than one.

and is not BIBO stable since the pole has a modulus strictly greater than one.
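The discrete-time criterion can be checked numerically. The sketch below takes the two example impulse responses to be $x[n] = 0.5^n u[n]$ and $x[n] = 1.5^n u[n]$, whose Z-transforms $1/(1 - a z^{-1})$ each have a single pole at $z = a$. The function names are illustrative, not taken from any library.

```python
def impulse_response(a, n_samples):
    """First n_samples of x[n] = a**n * u[n]; its Z-transform has one pole at z = a."""
    return [a ** n for n in range(n_samples)]

def is_bibo_stable(poles):
    """Discrete-time test: every pole must lie strictly inside the unit circle."""
    return all(abs(p) < 1.0 for p in poles)

for a in (0.5, 1.5):
    x = impulse_response(a, 50)
    total = sum(abs(v) for v in x)
    print(f"pole at z = {a}: stable = {is_bibo_stable([a])}, "
          f"sum of |x[n]| over 50 samples = {total:.3g}")
```

For the pole at 0.5 the partial sums of $|x[n]|$ approach 2, so a bounded input can only produce a bounded output; for the pole at 1.5 they grow without limit as more samples are included. Because `abs` also works on complex numbers, the same test applies to complex poles.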

Numerous tools exist for the analysis of the poles of a system. These include graphical systems like the root locus, Bode plots or the Nyquist plots.

Controllability and observability

Controllability and observability are the main issues in the analysis of a system before deciding the best control strategy to be applied, or whether it is even possible to control or stabilize the system. Controllability is related to the possibility of forcing the system into a particular state by using an appropriate control signal. If a state is not controllable, then no signal will ever be able to control it. If a state is not controllable, but its dynamics are stable, then the state is termed stabilizable. Observability, by contrast, is related to the possibility of "observing", through output measurements, the state of a system. If a state is not observable, the controller will never be able to determine its behaviour and hence cannot use it to stabilize the system. However, similar to the stabilizability condition above, if a state cannot be observed it might still be detectable.

From a geometrical point of view, looking at the states of each variable of the system to be controlled, every "bad" state of these variables must be controllable and observable to ensure a good behaviour in the closed-loop system. That is, if one of the eigenvalues of the system is not both controllable and observable, this part of the dynamics will remain untouched in the closed-loop system. If such an eigenvalue is not stable, the dynamics of this eigenvalue will be present in the closed-loop system which therefore will be unstable. Unobservable poles are not present in the transfer function realization of a state-space representation, which is why sometimes the latter is preferred in dynamical systems analysis.

Solutions to problems of uncontrollable or unobservable systems include adding actuators and sensors.
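For linear state-space models, one standard way to test these properties is the Kalman rank condition, which is brought in here as an illustration and is not described in the text above. The sketch below is restricted, for simplicity, to systems with two states, one input and one output, where the rank condition reduces to a non-zero 2x2 determinant. The double-integrator example (position and velocity driven by a force) and all names are assumptions.

```python
def det2(m):
    """Determinant of a 2x2 matrix given as nested lists."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def is_controllable(a, b, tol=1e-12):
    """Kalman test for 2 states, 1 input: the matrix [b, A b] must be invertible."""
    ab = [a[0][0] * b[0] + a[0][1] * b[1],
          a[1][0] * b[0] + a[1][1] * b[1]]
    return abs(det2([[b[0], ab[0]], [b[1], ab[1]]])) > tol

def is_observable(a, c, tol=1e-12):
    """Dual test for 1 output: the matrix with rows c and c A must be invertible."""
    ca = [c[0] * a[0][0] + c[1] * a[1][0],
          c[0] * a[0][1] + c[1] * a[1][1]]
    return abs(det2([c, ca])) > tol

# Double integrator: state = (position, velocity), input = force on the velocity.
A = [[0.0, 1.0],
     [0.0, 0.0]]
print(is_controllable(A, [0.0, 1.0]))   # force enters through the velocity equation
print(is_observable(A, [1.0, 0.0]))     # sensor measures position
print(is_observable(A, [0.0, 1.0]))     # sensor measures velocity only
```

In this example, measuring only the velocity leaves the position unobservable, which is the kind of situation that adding a sensor resolves.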

Control specifications

Several different control strategies have been devised in the past years. These vary from extremely general ones (the PID controller) to others devoted to very particular classes of systems (especially robotics or aircraft cruise control).

A control problem can have several specifications. Stability, of course, is always present: the controller must ensure that the closed-loop system is stable, regardless of the open-loop stability. A poor choice of controller can even worsen the stability of the open-loop system, which must normally be avoided. Sometimes it is desired to obtain particular dynamics in the closed loop: i.e. that the poles have $Re[\lambda] < -\overline{\lambda}$, where $\overline{\lambda}$ is a fixed value strictly greater than zero, instead of simply asking that $Re[\lambda] < 0$.

Another typical specification is the rejection of a step disturbance; including an integrator in the open-loop chain (i.e. directly before the system under control) easily achieves this. Other classes of disturbances need different types of sub-systems to be included.

Other "classical" control theory specifications regard the time-response of the closed-loop system: these include the rise time (the time needed by the control system to reach the desired value after a perturbation), peak overshoot (the highest value reached by the response before reaching the desired value) and others (settling time, quarter-decay). Frequency domain specifications are usually related to robustness (see below).

Modern performance assessments use some variation of integrated tracking error (IAE, ISA, CQI).

Model identification and robustness

A control system must always have some robustness property. A robust controller is one whose properties do not change much if it is applied to a system slightly different from the mathematical one used for its synthesis. This specification is important: no real physical system truly behaves like the series of differential equations used to represent it mathematically. Typically a simpler mathematical model is chosen in order to simplify calculations; otherwise the true system dynamics can be so complicated that a complete model is impossible.

System identification

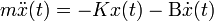

The process of determining the equations that govern the model's dynamics is called system identification. This can be done off-line: for example, by executing a series of measurements from which to calculate an approximated mathematical model, typically its transfer function or matrix. Such identification from the output, however, cannot take account of unobservable dynamics. Sometimes the model is built directly starting from known physical equations: for example, in the case of a mass-spring-damper system we know that $m \ddot{x}(t) = -K x(t) - B \dot{x}(t)$. Even assuming that a "complete" model is used in designing the controller, all the parameters included in these equations (called "nominal parameters") are never known with absolute precision; the control system will have to behave correctly even when connected to a physical system with true parameter values away from nominal.
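As an illustration of off-line identification, the sketch below fits the two ratios K/m and B/m of a mass-spring-damper model $m \ddot{x} = -K x - B \dot{x}$ by least squares. It is idealized on purpose: the "measurements" are generated by simulating the same model, they are noise-free, and position, velocity and acceleration are all assumed to be available. The parameter values and function names are hypothetical.

```python
def simulate(k_over_m, b_over_m, x0=1.0, v0=0.0, dt=0.01, steps=1000):
    """Generate (position, velocity, acceleration) samples of x'' = -(K/m) x - (B/m) x'."""
    x, v, samples = x0, v0, []
    for _ in range(steps):
        a = -k_over_m * x - b_over_m * v
        samples.append((x, v, a))
        v += a * dt            # semi-implicit Euler step
        x += v * dt
    return samples

def identify(samples):
    """Least-squares fit of a = t1*x + t2*v, solved through the 2x2 normal equations."""
    sxx = sum(x * x for x, v, a in samples)
    sxv = sum(x * v for x, v, a in samples)
    svv = sum(v * v for x, v, a in samples)
    sxa = sum(x * a for x, v, a in samples)
    sva = sum(v * a for x, v, a in samples)
    det = sxx * svv - sxv * sxv
    t1 = (sxa * svv - sva * sxv) / det
    t2 = (sva * sxx - sxa * sxv) / det
    return -t1, -t2            # estimates of K/m and B/m

data = simulate(k_over_m=4.0, b_over_m=0.5)
k_hat, b_hat = identify(data)
print(f"estimated K/m = {k_hat:.4f}, estimated B/m = {b_hat:.4f}")
```

With real sensor data the estimates would differ from the true values because of noise and unmodelled dynamics, which is one reason the controller must tolerate parameters away from nominal.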

Some advanced control techniques include an "on-line" identification process (see later). The parameters of the model are calculated ("identified") while the controller itself is running: in this way, if a drastic variation of the parameters ensues (for example, if a robot's arm releases a weight), the controller will adjust itself accordingly in order to ensure the correct performance.

Analysis

Analysis of the robustness of a SISO control system can be performed in the frequency domain, considering the system's transfer function and using Nyquist and Bode diagrams. Topics include gain margin, phase margin and amplitude margin. For MIMO and, in general, more complicated control systems one must consider the theoretical results devised for each control technique (see next section): i.e., if particular robustness qualities are needed, the engineer must turn to a control technique that includes them in its properties.

Constraints

A particular robustness issue is the requirement for a control system to perform properly in the presence of input and state constraints. In the physical world every signal is limited. It could happen that a controller will send control signals that cannot be followed by the physical system: for example, trying to rotate a valve at excessive speed. This can produce undesired behaviour of the closed-loop system, or even break actuators or other subsystems. Specific control techniques are available to solve the problem: model predictive control (see later) and anti-windup systems. The latter consist of an additional control block that ensures that the control signal never exceeds a given threshold.

Main control strategies

Every control system must first guarantee the stability of the closed-loop behaviour. For linear systems, this can be obtained by directly placing the poles. Non-linear control systems use specific theories (normally based on Aleksandr Lyapunov's theory) to ensure stability without regard to the inner dynamics of the system. The possibility of fulfilling different specifications depends on the model considered and the control strategy chosen. Here is a summary list of the main control techniques:

Classical control

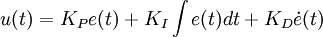

The PID controller is probably the most-used feedback control design, being the simplest one. "PID" means Proportional-Integral-Derivative, referring to the three terms operating on the error signal to produce a control signal. If u(t) is the control signal sent to the system, y(t) is the measured output, r(t) is the desired output, and the tracking error is $e(t) = r(t) - y(t)$, a PID controller has the general form

$u(t) = K_P e(t) + K_I \int e(t)\,dt + K_D \frac{d}{dt} e(t)$

The desired closed loop dynamics is obtained by adjusting the three parameters $K_P$, $K_I$ and $K_D$, often iteratively by "tuning" and without specific knowledge of a plant model. Stability can often be ensured using only the proportional term. The integral term permits the rejection of a step disturbance (often a striking specification in process control). The derivative term is used to provide damping or shaping of the response. PID controllers are the most well established class of control systems: however, they cannot be used in several more complicated cases, especially if MIMO systems are considered.
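The role of the integral term can be seen in a short simulation. The sketch below applies a discrete-time approximation of the law u = K_P e + K_I ∫e dt + K_D de/dt, with e = r - y, to a toy first-order plant dy/dt = -y + u + d subject to a constant disturbance d. The plant, the gains and the function name are assumptions chosen for illustration, not tuned values from any real process.

```python
def run_pid(kp, ki, kd, r=1.0, disturbance=-0.5, dt=0.01, steps=3000):
    """Simulate a PID loop on the toy plant dy/dt = -y + u + d; return the final output."""
    y = 0.0
    integral = 0.0
    prev_error = r - y                  # avoids a derivative spike on the first step
    for _ in range(steps):
        error = r - y
        integral += error * dt                      # rectangle-rule integral of the error
        derivative = (error - prev_error) / dt      # backward-difference derivative
        u = kp * error + ki * integral + kd * derivative
        prev_error = error
        y += (-y + u + disturbance) * dt            # Euler step of the plant
    return y

y_p = run_pid(kp=4.0, ki=0.0, kd=0.0)     # proportional term only
y_pid = run_pid(kp=4.0, ki=2.0, kd=0.1)   # all three terms
print(f"P only: y = {y_p:.3f}")
print(f"PID:    y = {y_pid:.3f}")
```

With the proportional term alone the output settles at about 0.7 rather than the reference of 1, because a constant error is needed to generate the control effort that balances the disturbance. With the integral term included, the output settles at the reference.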

Linear control

For MIMO systems, pole placement can be performed mathematically using a state space representation of the open-loop system and calculating a feedback matrix assigning poles in the desired positions. In complicated systems this can require computer-assisted calculation capabilities, and cannot always ensure robustness. Furthermore, not all system states are in general measured, and so observers must be included and incorporated in pole placement design.
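A very small instance of pole placement can be worked by hand. The sketch below uses a single-input double integrator (state = position and velocity, input u = -k1*position - k2*velocity), an example chosen here for simplicity rather than a MIMO system. For this plant the closed-loop characteristic polynomial is s^2 + k2 s + k1, so the gains follow directly from the desired poles. Both states are assumed to be measured, so no observer is involved, and the function names are illustrative.

```python
import cmath

def place_double_integrator(p1, p2):
    """Gains (k1, k2) giving closed-loop poles p1, p2 (both real, or a conjugate pair)."""
    k1 = complex(p1 * p2).real        # product of the poles
    k2 = -complex(p1 + p2).real       # minus the sum of the poles
    return k1, k2

def closed_loop_poles(k1, k2):
    """Roots of s^2 + k2*s + k1, the characteristic polynomial of [[0, 1], [-k1, -k2]]."""
    disc = cmath.sqrt(k2 * k2 - 4.0 * k1)
    return (-k2 + disc) / 2.0, (-k2 - disc) / 2.0

k1, k2 = place_double_integrator(-2 + 1j, -2 - 1j)
print("gains:", k1, k2)
print("closed-loop poles:", closed_loop_poles(k1, k2))
```

For larger systems the same idea is carried out with numerical routines rather than by matching polynomial coefficients.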

Non-linear control

Processes in industries like robotics and the aerospace industry typically have strong non-linear dynamics. In control theory it is sometimes possible to linearize such classes of systems and apply linear techniques, but in many cases it can be necessary to devise from scratch theories permitting control of non-linear systems. These (e.g. feedback linearization, backstepping, sliding mode control and trajectory linearization control) normally take advantage of results based on Lyapunov's theory. Differential geometry has been widely used as a tool for generalizing well-known linear control concepts to the non-linear case, as well as showing the subtleties that make it a more challenging problem.

Optimal control

Optimal control is a particular control technique in which the control signal optimizes a certain "cost index": for example, in the case of a satellite, the jet thrusts needed to bring it to a desired trajectory while consuming the least amount of fuel. Two optimal control design methods have been widely used in industrial applications, as it has been shown they can guarantee closed-loop stability. These are Model Predictive Control (MPC) and Linear-Quadratic-Gaussian control (LQG). The first can more explicitly take into account constraints on the signals in the system, which is an important feature in many industrial processes. However, the "optimal control" structure in MPC is only a means to achieve such a result, as it does not optimize a true performance index of the closed-loop control system. Together with PID controllers, MPC systems are the most widely used control technique in process control.

Adaptive control

Adaptive control uses on-line identification of the process parameters, or modification of controller gains, thereby obtaining strong robustness properties. Adaptive controls were applied for the first time in the aerospace industry in the 1950s, and have found particular success in that field.

Intelligent control

Intelligent control uses various AI computing approaches like neural networks, Bayesian probability, fuzzy logic, machine learning, evolutionary computation and genetic algorithms to control a dynamic system.

Hierarchical control

A hierarchical control system is a form of control system in which a set of devices and governing software is arranged in a hierarchical tree. When the links in the tree are implemented by a computer network, that hierarchical control system is also a form of networked control system.


![\ x[n] = 0.5^n u[n]](../../images/190/19042.png)

![\ x[n] = 1.5^n u[n]](../../images/190/19045.png)